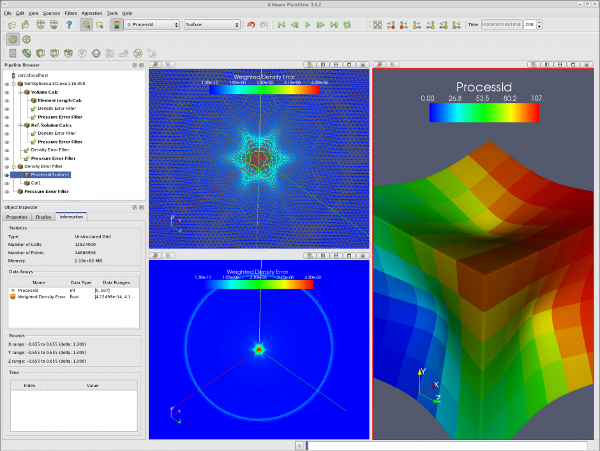

Hi everyone! When I try a ParaView 5.9.1 remote connection between a cluster (server) and Windows 10 (client GUI), the client connects, but about one second later the server crashes with a segmentation fault (core dumped). These are my steps:

1. `$ ssh` to the cluster (I could get onto the login node, hawk-login04).
2. From the local shell: `$ ssh -L 11111:hawk-login04:22222` (I could connect successfully).
3. From the cluster: `hawk-login04$ module load paraview/server/(version)`, then `hawk-login04$ pvserver --server-port=22222`, which prints `Accepting connection(s): hawk-login04:22222`.
4. From the local ParaView client: File > Connect > Add Server, with Server Type: Client / Server, Host: localhost, Port: 11111, then Save.

When I click Connect, the cluster shows `Waiting for client`, then reports that the client connected, and about one second later it fails with `Segmentation fault (core dumped)`. The stack trace printed on the cluster is:

- `7 0x7fd157a3dc21 vtkTCPNetworkAccessManager::ProcessEventsInternal(unsigned long, bool) + 609`
- `6 0x7fd156976d99 vtkMultiProcessController::ProcessRMIs(int, int) + 729`
- `5 0x7fd156976580 vtkMultiProcessController::ProcessRMI(int, void*, int, int) + 272`
- `4 0x7fd15823972d vtkPVSessionServer::OnClientServerMessageRMI(void*, int) + 1101`
- `3 0x7fd1582392aa vtkPVSessionServer::GatherInformationInternal(unsigned int, char const*, unsigned int, vtkMultiProcessStream&) + 714`
- `2 0x7fd1579ebf8f vtkPVDataInformation::CopyParametersFromStream(vtkMultiProcessStream&) + 111`
- `1 0x7fd15697c130 vtkMultiProcessStream::operator>>(std::string&) + 224`
- `0 0x7fd159eef880 /lib64/libc.so.`

I do not know what is wrong. If anyone could give me suggestions, I would appreciate it.

To make the installation of the cluster easier, I decided to use the Rocks cluster kit, so as to have the basic cluster up and running as quickly as possible (whether you use another cluster kit or even build the basic cluster manually should not matter much for the installation of ParaView).

Microsoft MPI (MS-MPI) offers several benefits:

- Ease of porting existing code that uses MPICH.
- Security based on Active Directory Domain Services.
- High performance on the Windows operating system.
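The port plumbing in the question is easy to get wrong, so here is a minimal Python sketch that derives both command lines from one set of numbers: the SSH local forward (local port 11111 to hawk-login04:22222) and the matching `pvserver --server-port` call. The function names and the `user@cluster.example.org` target are hypothetical placeholders (the real user@host was not given), and the optional `mpiexec -np` wrapper assumes an MPI-enabled pvserver build and a launcher of that name on the cluster.

```python
import shlex


def tunnel_command(ssh_target: str, login_node: str, remote_port: int, local_port: int) -> list[str]:
    """Build `ssh -L local_port:login_node:remote_port ssh_target`.

    Connections to localhost:local_port on the client machine are forwarded
    through ssh_target to login_node:remote_port, where pvserver listens.
    """
    return ["ssh", "-L", f"{local_port}:{login_node}:{remote_port}", ssh_target]


def pvserver_command(remote_port: int, nprocs: int = 1) -> list[str]:
    """Build the pvserver call; with nprocs > 1 it is wrapped in mpiexec (assumed launcher)."""
    cmd = ["pvserver", f"--server-port={remote_port}"]
    if nprocs > 1:
        cmd = ["mpiexec", "-np", str(nprocs)] + cmd
    return cmd


if __name__ == "__main__":
    # Hypothetical ssh target; substitute your own user@host.
    print(shlex.join(tunnel_command("user@cluster.example.org", "hawk-login04", 22222, 11111)))
    print(shlex.join(pvserver_command(22222)))
```

With these numbers the script prints `ssh -L 11111:hawk-login04:22222 user@cluster.example.org` and `pvserver --server-port=22222`; the ParaView client's server entry then points at Host localhost and Port 11111 (the local end of the tunnel), never at 22222. Separately, the ParaView client and pvserver are expected to run the same ParaView version, so checking that the loaded `paraview/server/(version)` module matches the 5.9.1 client is a reasonable first thing to rule out for a crash like this one.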
MS-MPI is Microsoft's implementation of the Message Passing Interface (MPI) standard for developing and running parallel applications on the Windows platform.

ParaView is designed from the ground up to run efficiently on large parallel distributed-memory cluster computers, and our current usage of ParaView has … It handles large data sets, integrates efficiently with parallel, cluster-type environments, and is designed to work well in client/server mode, with the server deployed on a visualization cluster. In this way, users get the full advantage of a shared, remote, high-performance rendering cluster without leaving their offices. The SALOME application also integrates ParaView's visualization capabilities in the form of a dedicated module.

HOWTO run ParaView on the EPFL central facilities: click File > Connect, then Add Server, enter the name of the cluster (for example ) and choose.

This page contains instructions on how to test ParaView running on a remote server or cluster. It is only a testing procedure; it does not explain how to actually set up the connection. This document is designed to help you get started with building and setting up your own ParaView server.

Some of the decisions taken for the installation of our cluster reflect the type of cluster we wanted, so the guide is certainly not comprehensive, but I put it here hoping it can be useful to someone else. Some issues (like X connections) seem to be a common source of errors, and the way we solved them can probably be useful to others. I'm sure many things could be done better, and I would appreciate any comments on how to improve them.

Our test cluster is made up of four x86_64 nodes (each with four CPUs) connected via a Gigabit switch. The frontend has two network cards: eth1 is connected to the outside world, while eth0 (as on the other nodes) is connected to the switch.
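For a test cluster like this one, the MPI side of a parallel pvserver reduces to simple arithmetic: 4 nodes × 4 CPUs = 16 slots, matching the 16 CPUs mentioned for the student-project server. The minimal Python sketch below writes Open MPI hostfile text (one `hostname slots=N` line per node). The node names are hypothetical Rocks-style placeholders, and whether the frontend itself should also run pvserver processes is an assumption left open here.

```python
def openmpi_hostfile(nodes: list[str], slots_per_node: int) -> str:
    """Return Open MPI hostfile text: one 'hostname slots=N' line per node."""
    return "".join(f"{node} slots={slots_per_node}\n" for node in nodes)


def total_slots(nodes: list[str], slots_per_node: int) -> int:
    """Processes that fit without oversubscribing: nodes x CPUs per node."""
    return len(nodes) * slots_per_node


if __name__ == "__main__":
    # Hypothetical compute-node names; substitute the cluster's real hostnames.
    nodes = [f"compute-0-{i}" for i in range(4)]
    print(openmpi_hostfile(nodes, 4), end="")
    # Matching launch line, assuming Open MPI's mpirun and an MPI-enabled pvserver.
    print(f"mpirun --hostfile hosts -np {total_slots(nodes, 4)} pvserver")
```

Saving the first four printed lines as `hosts` and launching with the printed `mpirun` line would start one pvserver process per CPU; MPICH-based launchers use a different hostfile syntax, so this format is specific to Open MPI.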
In client/server mode, a ParaView server runs on the Biowulf cluster and an interactive ParaView client runs on your local machine. In Desktop mode, ParaView runs entirely on your local machine.

ParaView is an open-source toolkit for visualizing scientific data and can leverage clusters of compute instances to render large datasets. The Research Computing Cluster (RCC) offers OpenFOAM and ParaView through virtual machine images that can be easily incorporated into an existing RCC.

As part of a student project that I was mentoring, I built a small ParaView server (4 nodes, 16 CPUs). Since the ParaView documentation didn't seem to have a step-by-step guide on how to do it, I put together notes on what I did.

For production usage, ParaView Visualizer should be deployed within your web infrastructure following the proper requirements: 1) serve the Visualizer application (static content: JS + HTML) to the client using any kind of web server (Apache, Nginx, Tomcat, Node).
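Step 1 of a Visualizer deployment only requires that the static JS + HTML bundle be served over HTTP. Before wiring it into Apache or Nginx, a bundle can be smoke-tested locally with Python's standard-library `http.server`. This is a development convenience sketch, not a production server, and the directory you point it at (wherever your Visualizer build lives) is an assumption; `start_static_server` is a hypothetical helper name.

```python
import functools
import http.server
import threading


def start_static_server(root: str, host: str = "127.0.0.1", port: int = 0) -> http.server.ThreadingHTTPServer:
    """Serve the files under `root` (e.g. a built Visualizer bundle) in a background thread.

    port=0 asks the OS for a free port; the chosen one is server.server_address[1].
    Call server.shutdown() and then server.server_close() when done.
    """
    # SimpleHTTPRequestHandler serves files relative to `directory` (Python 3.7+).
    handler = functools.partial(http.server.SimpleHTTPRequestHandler, directory=root)
    server = http.server.ThreadingHTTPServer((host, port), handler)
    # Daemon thread so a forgotten server does not keep the interpreter alive.
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

For example, `srv = start_static_server("./visualizer-dist", port=8080)` (a hypothetical build directory) makes the bundle reachable at `http://127.0.0.1:8080/`; the production setup described above would replace this with a real web server in front of the same static files.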
